TL;DR

Ever since LLMs hit the mainstream, attention has focused on the model: GPT-5, Claude, Gemini, Qwen. But in production, the real lever for reliability is no longer the model — it's everything around it. Context, tools, workflow, validation, guardrails, observability. We call that the harness, and designing it is a discipline of its own: Harness Engineering.

At VOID, we built our first harness as a 100% on-premise stack to automate security updates, tested on open-source code and our own internal projects. Stack: Autogit (our internal VOID orchestration tool, being open-sourced), Ollama serving Qwen3.5-27B, all running on an Nvidia RTX PRO 6000. This hands-on experience reminded us of a simple truth: without an automated feedback loop, an AI agent has no business in production.

“Early progress was slower than we expected, not because Codex was incapable, but because the environment was underspecified. The agent lacked the tools, abstractions, and internal structure required to make progress toward high-level goals. The primary job of our engineering team became enabling the agents to do useful work.”

1. The "we plugged in GPT, it'll work" trap

Everyone has seen this scenario. A company wires an LLM into their information system. Early demos are thrilling. Ship to production. Suddenly, the agent starts hallucinating references, ignoring critical instructions ("never touch the prod database"), doing the exact opposite of what was asked.

Usual diagnosis: "the model isn't good enough, let's wait for the next generation." Wrong. 90% of the time, it isn't a model problem. It's a harness problem.

The most common mistake

Believing that an LLM, shipped alone, can honor commitments in production. A raw model has no persistent memory, no guardrails, no vetted tools, no recovery loop. It improvises. In production, improvising is not an option.

2. What is a harness in AI?

The word harness — literally a riding or climbing harness — refers in AI engineering to everything around the model that makes it useful and reliable. The LLM is the engine. The harness is the chassis, the steering, the brakes, the dashboard.

Components of a harness

Several consumer products we use every day are actually harnesses wrapped around one or more LLMs:

- Cursor / Claude Code: a harness for coding

- Devin (Cognition): a harness for autonomous development

- Perplexity: a harness for web search

- Harvey: a harness for the legal industry

- GitHub Copilot: a harness for IDE autocomplete

So the question we ask in Harness Engineering isn't "which model should we pick?", but "what environment should we design around the model so it handles the task reliably, traceably, and within governance?"

3. Clarifying the vocabulary

The field moves fast and several terms circulate, often conflated. To frame the discussion, here are the useful distinctions:

| Term | What it refers to | Popularized by |
|---|---|---|
| Harness / AI Harness | The software infrastructure around the LLM (tools, memory, validation, workflow) | Anthropic, Cursor, Devin |
| Context Engineering | The discipline of designing what we feed the agent (context, docs, specs) | Lütke · Karpathy · Willison (June 2025); formalized by Anthropic (Sep. 2025) |
| Agent / Agentic Engineering | Designing the full workflow of the agent (plan, exec, verify) | Open-source community |
| Prompt Engineering | (older) writing the right prompts; still useful, but insufficient | 2023 |
| Steering | Guiding / constraining the model to follow directives, even when it tends to forget them | Anthropic / OpenAI research |
| Scaffolding | The "rails" placed around the agent to channel its behavior | Academic papers |
| Skills / Tool use | The capabilities given to the agent (exposed functions, MCP, tools) | OpenAI, Anthropic, MCP |

Harness Engineering glossary

In what follows, we use "Harness Engineering" as the umbrella term. All others are sub-disciplines or techniques that attach to it.

4. The 6 pillars of a good harness

Building a harness isn't about stacking tools. It's about addressing six distinct concerns, and none of them are optional in production.

Context Engineering — manage the context, not just write it

Term popularized in June 2025 by Tobi Lütke (Shopify CEO), amplified by Andrej Karpathy, then crystallized by Simon Willison, and formalized by Anthropic in September 2025 as "the natural progression of prompt engineering." You stop writing a single good prompt and start actively managing the context across a long session. In practice: memory (what do we keep between turns?), compacting & summarization (what's essential to carry over?), preventing context rot — that slow rot when you pile up unused tokens — via micro-compacting, and regularly cleaning the tools exposed to the agent. Without this, even the best business docs and a well-indexed RAG end up drowning the agent in its own context.

Principle: a clean context beats a large context. Garbage in, garbage out — to the power of 10.
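To make compaction concrete, here is a minimal sketch. It assumes a crude estimate of four characters per token and a caller-supplied `summarize` function; both are placeholders for illustration, and a real harness would use the model's tokenizer and probably an LLM call to write the summary. The `Message` shape is hypothetical too, not tied to any SDK.

```typescript
// Hypothetical message shape; not tied to any specific SDK.
type Message = { role: "system" | "user" | "assistant" | "tool"; content: string };

// Crude assumption: roughly 4 characters per token. Use the model's tokenizer in practice.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

function totalTokens(messages: Message[]): number {
  return messages.reduce((sum, m) => sum + estimateTokens(m.content), 0);
}

// Keeps system messages and the most recent turns verbatim, and replaces
// everything older with one summary message once the budget is exceeded.
function compact(
  messages: Message[],
  budgetTokens: number,
  keepRecent: number,
  summarize: (older: Message[]) => string,
): Message[] {
  if (totalTokens(messages) <= budgetTokens) return messages;

  const system = messages.filter((m) => m.role === "system");
  const rest = messages.filter((m) => m.role !== "system");
  const cut = Math.max(0, rest.length - keepRecent);
  const older = rest.slice(0, cut);
  const recent = rest.slice(cut);
  if (older.length === 0) return messages; // nothing left to compact

  const summary: Message = {
    role: "assistant",
    content: `Summary of ${older.length} earlier messages: ${summarize(older)}`,
  };
  return [...system, summary, ...recent];
}
```

Running `compact` before each model call keeps the window bounded; what goes into the summary is the real design decision, and it is worth evaluating like any other part of the harness.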

Skills & Tools — right tools, tight perimeter

Exposed functions, internal APIs, CLI commands, scoped read/write access to a specific perimeter — ideally inside a dedicated Docker sandbox to contain any destructive action. The Model Context Protocol (MCP) is emerging as the standard for connecting agents to tools — use it wisely: every wired-in MCP server is also a token burner (tool descriptions, schemas, metadata silently eat into your context window).

Principle: the agent can only do what we explicitly allow it to do, in an environment we can throw away.
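One way to enforce that principle in code is a single choke point for tool calls plus a path check against the sandbox root. The sketch below is illustrative only: `ToolRegistry`, `resolveInSandbox`, and the `/workspace` root used in the usage note are hypothetical names, not part of MCP or of any framework named in this article.

```typescript
// Hypothetical tool registry: the agent can only call what is registered here.
type ToolHandler = (args: Record<string, string>) => string;

class ToolRegistry {
  private readonly tools = new Map<string, ToolHandler>();

  register(name: string, handler: ToolHandler): void {
    this.tools.set(name, handler);
  }

  // Every tool call requested by the model goes through this single choke point.
  call(name: string, args: Record<string, string>): string {
    const handler = this.tools.get(name);
    if (handler === undefined) {
      throw new Error(`Tool "${name}" is not allowed`);
    }
    return handler(args);
  }
}

// Resolves a path against the sandbox root. Leading slashes are ignored, so the
// path is always treated as relative; any ".." that would climb out is refused.
function resolveInSandbox(root: string, relativePath: string): string {
  const parts: string[] = [];
  for (const segment of relativePath.split("/")) {
    if (segment === "" || segment === ".") continue;
    if (segment === "..") {
      if (parts.length === 0) throw new Error("Path escapes the sandbox");
      parts.pop();
    } else {
      parts.push(segment);
    }
  }
  return [root.replace(/\/+$/, ""), ...parts].join("/");
}
```

A `read_file` tool registered with `resolveInSandbox("/workspace", …)` can read `src/../package.json` but not `../../etc/passwd`, and a request for an unregistered tool such as `drop_database` fails before anything runs. The container remains the real boundary; this check only keeps mistakes cheap.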

Workflow — orchestrating the steps

Planning → execution → verification → recovery. Patterns that work: ReAct, Chain-of-Thought, Tree-of-Thoughts, agentic loops. For complex tasks, we decompose into multiple agents (planner, executor, critic).

Principle: an agent without a workflow drifts.
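The plan, execute, verify, recover cycle can be sketched as a bounded loop. The `Steps` interface below is an assumption made for illustration: in a real harness each step would be an asynchronous LLM or tool call, and the retry limit would depend on the task.

```typescript
// Hypothetical step signatures; a real harness would make these async LLM and tool calls.
type Verdict = { ok: boolean; feedback: string };

interface Steps {
  plan(goal: string, feedback: string | null): string;
  execute(plan: string): string;
  verify(result: string): Verdict;
}

type Outcome =
  | { status: "done"; result: string; attempts: number }
  | { status: "escalated"; lastFeedback: string; attempts: number };

// Plan -> execute -> verify, feeding verification feedback into the next plan.
// After maxAttempts failed verifications, the loop stops and hands over to a human.
function runWorkflow(goal: string, steps: Steps, maxAttempts: number): Outcome {
  let feedback: string | null = null;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const plan = steps.plan(goal, feedback);
    const result = steps.execute(plan);
    const verdict = steps.verify(result);
    if (verdict.ok) return { status: "done", result, attempts: attempt };
    feedback = verdict.feedback; // recovery: the next plan sees why this one failed
  }
  return { status: "escalated", lastFeedback: feedback ?? "", attempts: maxAttempts };
}
```

The explicit `escalated` outcome is the point of the design: the loop never retries forever, and a run that cannot pass verification ends with a human rather than with a guess.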

Validation loops — verify before commit

Self-critique, automated evals, human-in-the-loop on critical actions. This is where automated tests (unit, integration, E2E Playwright) truly earn their keep.

Principle: an agent that checks its own work beats a bigger agent.

Steering & Guardrails — holding the line

This is the answer to the frustrating question: "Why is the agent doing the opposite of what I asked?" Techniques: reinforced system prompts, constitutional AI, strict output parsing, business rules outside the LLM, and above all a strict CI pipeline the agent can't bypass: quality gates (Sonar Way), security (Snyk, Trivy, SAST), plus live feedback on the agent side — lint, type-check and quick checks running continuously, so issues get fixed in-flight rather than at the end of a long run.

Anti-pattern: putting everything in the prompt and hoping it holds. Critical constraints must live in deterministic code and in CI — not in a system prompt.

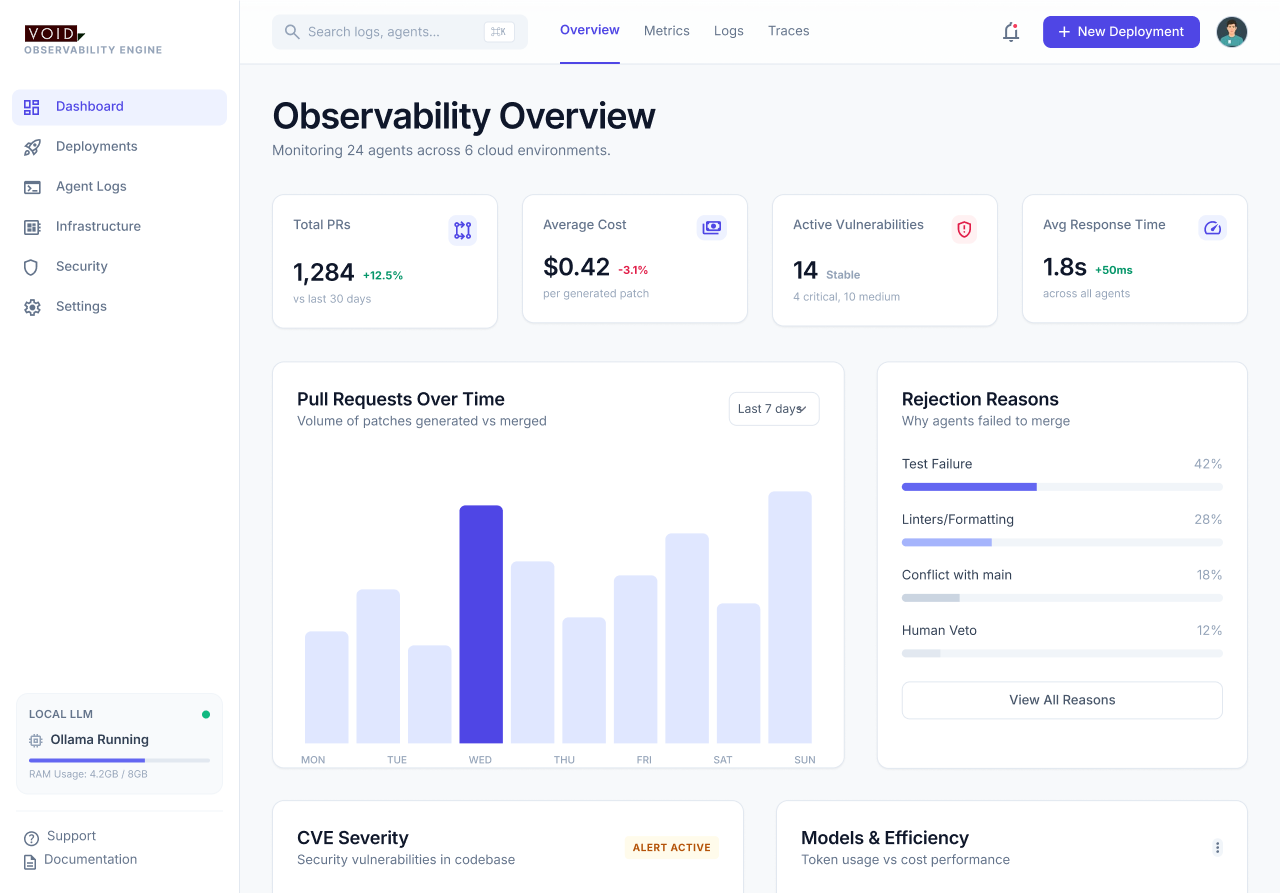

Observability — know what the agent does, and improve it

Traces (LangSmith, Langfuse, Arize), tool-call logs, baseline metrics: success rate, hallucination rate, cost per task. But most importantly: plan for improvement from day one. Is it slow? Which tool call is your worst performer? Is it network latency, prompt processing, token generation? Why did the agent get this wrong — missing skill? missing context? missing tool? You need to collect every signal you can to feed a self-improvement loop — ideally letting another model (larger, or a judge) mine the traces and suggest harness-level fixes.

Principle: you can't manage what you don't measure, and you can't improve what you didn't instrument from day one.
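As a sketch of the baseline metrics, the function below reduces a batch of run traces to a success rate, a cost per task, and the tool with the worst average latency. The `RunTrace` shape is hypothetical; LangSmith, Langfuse, and Arize each have their own schemas, and the fields here are only assumed to be derivable from them.

```typescript
// Hypothetical trace shape; tracing tools have their own schemas.
type ToolCall = { tool: string; durationMs: number };
type RunTrace = { taskId: string; success: boolean; costUsd: number; toolCalls: ToolCall[] };

type Report = { successRate: number; costPerTask: number; slowestTool: string | null };

function buildReport(traces: RunTrace[]): Report {
  if (traces.length === 0) return { successRate: 0, costPerTask: 0, slowestTool: null };

  const successes = traces.filter((t) => t.success).length;
  const totalCost = traces.reduce((sum, t) => sum + t.costUsd, 0);

  // Accumulate total duration and call count per tool.
  const perTool = new Map<string, { total: number; count: number }>();
  for (const trace of traces) {
    for (const call of trace.toolCalls) {
      const stats = perTool.get(call.tool) ?? { total: 0, count: 0 };
      stats.total += call.durationMs;
      stats.count += 1;
      perTool.set(call.tool, stats);
    }
  }

  // Pick the tool with the highest average duration.
  const averages: Array<{ tool: string; avg: number }> = [];
  perTool.forEach((stats, tool) => averages.push({ tool, avg: stats.total / stats.count }));
  averages.sort((a, b) => b.avg - a.avg);
  const worst = averages[0];

  return {
    successRate: successes / traces.length,
    costPerTask: totalCost / traces.length,
    slowestTool: worst === undefined ? null : worst.tool,
  };
}
```

Hallucination rate is left out on purpose: it needs a labeling step (human or judge model) that a pure aggregation cannot provide.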

Deterministic vs non-deterministic

The line between what we delegate to the LLM and what must stay in classical code.

Deterministic: always the same output

Same input, same result. Reproducible, auditable, testable.

Examples: business code, SQL rules, classical APIs, unit tests, guardrails.

Non-deterministic: variable output

Same input, result may vary. Creative, great for language, but unpredictable.

Examples: LLMs (GPT, Claude, Qwen), image generation, summaries, reasoning.

Example: a banking refund rule

Rule: "No refund > MAD 5,000 without human escalation."

Rule in the prompt:

"Never approve > MAD 5,000 without human escalation."

→ One day, the LLM approves MAD 8,000. Rare, but it will happen. And 1% of cases = disaster.

Rule in the code:

    if (amount > 5000) {
      escalateToHuman();
    }

→ Impossible to bypass. Code never drifts.

The golden rule of the Harness

Critical → deterministic. Creative → non-deterministic. The harness draws the line.
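The refund rule above can be sketched as a small decision function that sits between the LLM and the payment system. The types and names (`RefundProposal`, `decideRefund`, the `Decision` variants) are hypothetical; only the MAD 5,000 threshold comes from the example.

```typescript
// Threshold taken from the rule above; everything else is illustrative.
const ESCALATION_THRESHOLD_MAD = 5000;

type RefundProposal = { customerId: string; amountMad: number; reason: string };
type Decision =
  | { kind: "approved"; amountMad: number }
  | { kind: "escalated"; amountMad: number; why: string }
  | { kind: "rejected"; why: string };

// The LLM only proposes. This function decides, and it never consults the model.
function decideRefund(proposal: RefundProposal): Decision {
  if (!Number.isFinite(proposal.amountMad) || proposal.amountMad <= 0) {
    return { kind: "rejected", why: "invalid amount" };
  }
  if (proposal.amountMad > ESCALATION_THRESHOLD_MAD) {
    return {
      kind: "escalated",
      amountMad: proposal.amountMad,
      why: "above threshold, human review required",
    };
  }
  return { kind: "approved", amountMad: proposal.amountMad };
}
```

Whatever the model writes in `reason`, an MAD 8,000 proposal comes back as `escalated`. The check is also trivially unit-testable, which is exactly what a sentence in a system prompt is not.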

5. The 3 traps that kill AI agent projects

"The model is smart enough, no workflow needed"

Wrong. Even GPT-5 or Claude Opus drift without scaffolding. The more sensitive the task, the more critical the workflow.

"We'll put all the rules in the prompt"

A prompt is a request, not an enforcement mechanism: the model's adherence to it is probabilistic, and it drifts. Critical constraints (security, amounts, permissions) must live outside the LLM, in deterministic code that frames it. That's the golden rule.

"We'll evaluate it in production"

Too late. You need an evals suite before production, covering nominal, edge, and adversarial cases, combined with a human-in-the-loop on sensitive actions and an LLM-as-a-judge (with an explicit rubric, and its known biases accounted for) to iterate quickly on quality regressions.
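A pre-production evals gate can be as simple as a pass rate per category with a threshold for each. The sketch below is one possible shape: the threshold values, the `EvalCase` type, and the synchronous `agent` function are assumptions for illustration, and the `passes` check stands in for whatever scorer you use (exact match, rubric, judge model).

```typescript
type Category = "nominal" | "edge" | "adversarial";
type EvalCase = { id: string; category: Category; input: string; passes: (output: string) => boolean };

// Minimum pass rate per category. Illustrative values: adversarial cases tolerate no failure.
const THRESHOLDS: Record<Category, number> = { nominal: 0.95, edge: 0.9, adversarial: 1.0 };

type GateResult = { release: boolean; passRates: Record<Category, number>; failedIds: string[] };

function runEvals(cases: EvalCase[], agent: (input: string) => string): GateResult {
  const totals: Record<Category, number> = { nominal: 0, edge: 0, adversarial: 0 };
  const passed: Record<Category, number> = { nominal: 0, edge: 0, adversarial: 0 };
  const failedIds: string[] = [];

  for (const c of cases) {
    totals[c.category] += 1;
    if (c.passes(agent(c.input))) passed[c.category] += 1;
    else failedIds.push(c.id);
  }

  const categories: Category[] = ["nominal", "edge", "adversarial"];
  const passRates: Record<Category, number> = { nominal: 0, edge: 0, adversarial: 0 };
  let release = true;
  for (const cat of categories) {
    // An empty category counts as a failure: untested is not the same as passing.
    passRates[cat] = totals[cat] === 0 ? 0 : passed[cat] / totals[cat];
    if (passRates[cat] < THRESHOLDS[cat]) release = false;
  }
  return { release, passRates, failedIds };
}
```

Wired into CI, `release === false` blocks the deployment, and `failedIds` tells you which cases to look at first.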

6. VOID's case study: a 100% sovereign, fleet-wide remediation agent

The lesson we want everyone to remember

An AI agent that fixes code but can't test its own work is worse than no agent. Before shipping an AI agent to a project, the priority isn't the model, isn't the orchestration framework, isn't the prompts.

The priority is the feedback loop: unit tests, integration tests, E2E Playwright tests. At VOID, we flipped our approach — we frame and level up the automated test harness before talking about an AI agent at all.

The context

On our DevOps and AWS managed-services engagements, we keep seeing the same reality: security debt piles up faster than it gets paid down. CVEs on NPM / Python / Java dependencies, OS patches, container vulnerabilities. Dozens of alerts every week. Product teams push features, and the debt grows. And this pain isn't limited to CVEs — the same mechanics apply to code propagation (rolling an API change across an entire repo), dependency management at scale, and fleet-wide remediation when the same fix needs to land across dozens — or hundreds — of projects.

10:47 PM: a critical CVE drops on a dependency used by 100 internal projects. Without an agent, that's three days of cross-team coordination warfare.

7:30 AM the next morning: the agent has opened 100 PRs, each with the fix, green tests, a link to an ephemeral test environment, and a Slack notification to the repo owner. Humans are left with what matters: the review and the merge decision.

Many regulated environments — typically banks, insurers, government — enforce a non-negotiable constraint: code must never leave the infrastructure. That rules out SaaS offerings like Copilot, Cursor or Dependabot Premium. You need a sovereign AI agent, running entirely on-premise.

So we set out to validate the concept end-to-end before any rollout. We ran the pilot on open-source code and on our own internal VOID projects, never on client code. Goal: prove feasibility and measure the limits of a sovereign AI agent that detects vulnerabilities, fixes the code, runs the tests, opens a PR — all without a single byte leaving the infra.

What follows is the raw case study from this pilot: the technical choices, the results, and — most importantly — the lessons we now apply to every project.

The 100% on-premise stack

After several iterations, we converged on a fully self-hosted architecture:

| Component | Choice | Role |
|---|---|---|
| Orchestration | Autogit (VOID internal tool, self-hosted, being open-sourced) | CVE detection, workflow steering, Git interaction |
| Inference server | vLLM (prod) / Ollama (dev) | Serves the model locally: vLLM for prod throughput, Ollama to iterate |
| Model | Qwen3.5-27B | Code reasoning, fix generation |
| Hardware | Nvidia RTX PRO 6000 (Blackwell) | Pro-grade card, 96 GB VRAM, ideal for a 27B model locally |
| Vulnerability scan | Trivy / Snyk CLI | CVE detection + SAST |
| E2E tests | Playwright + existing unit/integration suite | Feedback loop: no PR without green tests |
| Sandbox | Docker sandboxes / sandboxagent.dev | Isolated, throw-away environment: the agent executes commands without risking the host |
| Git platform | GitHub / GitLab API | PR creation + review comments |

100% on-premise technical stack

The key benefit: total sovereignty

No outbound calls. No third-party LLM API. No data exfiltration. Code never leaves its home environment. End-to-end auditable by a CISO, ready to be replicated inside regulated infrastructures.

The harness architecture

| Pillar | Implementation |
|---|---|
| Context | Full repo + PR history + internal docs |
| Tools | Git, Trivy/Snyk, test runner, GitHub/GitLab API |
| Workflow | Detect CVE → localize code → draft fix → run tests → create PR |
| Validation | Unit + integration + E2E Playwright tests. No PR if tests are red |
| Guardrails | Dedicated branch. Never auto-merge. Mandatory human review |
| Observability | Logs on every run, PR acceptance rate, average time-to-fix |

Harness architecture mapped to the 6 pillars

What worked

- ✓ Trivial fixes (patch version bumps, minor updates, well-documented CVEs): excellent results. Qwen3.5-27B running locally handles them perfectly.

- ✓ Non-regression tests generated by the agent on the functions touched by the update. Not perfect, but often better than "nothing".

- ✓ Clear PR documentation (CVE referenced, scope of the fix, tests added). Massive time savings for the reviewer.

- ✓ Sovereignty: zero data sent to a third party. Stack fully auditable by a CISO.

What didn't work as well

- ⚠ Breaking changes between majors: in pure autonomy, the agent tries to blindly fix a v2 → v3 upgrade and breaks the build, because it doesn't grasp the business impact. Our answer wasn't to ban majors, but to specialize the harness: dedicated Autogit actions with enriched prompts (scraped official migration guide, changelog, API diff, impact checklist) steer the agent through those cases. The workflow is slower and human review is mandatory. "Light autonomous" mode stays reserved for minors and patches. That's exactly the article's message: we don't change the model, we adapt the harness to the risk level.

- ⚠ Complex transitive dependencies (deeply nested NPM peer dependencies): the agent gets lost and can't trace the chain when an update indirectly breaks another dependency.

- ⚠ Autonomous generation of Playwright E2E tests: nope. The agent can run the existing Playwright suite and use it as a validation signal, but it can't write a relevant E2E scenario on its own (stable selectors, data-testid, business assertions). Playwright tests stay written in co-pilot mode (developer + agent, Cursor / Copilot style), not autonomously. That is exactly why we audit and level up the test harness before shipping the autonomous agent.

- ⚠ Qwen3.5-27B vs a frontier model: on pure reasoning, you feel the gap with GPT-5 or Claude Opus. But for this specific use case (repetitive patterns, localized code, tests as a signal), Qwen3.5-27B is more than enough. And that's the price of sovereignty. Qwen3.6-27B, shipped on April 22, 2026 on Hugging Face, narrows that gap on public agentic benchmarks (SWE-bench Verified 77.2%). We run it on the same harness, no rebuild, with very encouraging early signals.
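Adapting the harness to the risk level, as described for major upgrades above, can start with a deterministic router that picks the workflow from the version bump. This is a sketch, not Autogit's actual logic: the mode names and function names are invented for illustration, and sending 0.x versions down the guided path is an added assumption based on the Semantic Versioning convention that anything may change before 1.0.0.

```typescript
type Mode = "light-autonomous" | "guided-with-human-review";

// Parses "MAJOR.MINOR.PATCH" (an optional leading "v" is accepted); returns null for anything else,
// including pre-release tags such as "1.0.0-beta".
function parseVersion(version: string): [number, number, number] | null {
  const match = /^v?(\d+)\.(\d+)\.(\d+)$/.exec(version);
  if (match === null) return null;
  return [Number(match[1]), Number(match[2]), Number(match[3])];
}

// Patch and minor bumps go to the light autonomous flow. Majors, downgrades,
// 0.x versions, and anything unparseable take the slower, guided path.
function routeUpgrade(from: string, to: string): Mode {
  const a = parseVersion(from);
  const b = parseVersion(to);
  if (a === null || b === null) return "guided-with-human-review";

  const [aMajor, aMinor, aPatch] = a;
  const [bMajor, bMinor, bPatch] = b;
  if (aMajor !== bMajor) return "guided-with-human-review";
  if (aMajor === 0) return "guided-with-human-review"; // assumption: 0.x offers no stability guarantee
  const isDowngrade = bMinor < aMinor || (bMinor === aMinor && bPatch < aPatch);
  if (isDowngrade) return "guided-with-human-review";
  return "light-autonomous";
}
```

The router is deliberately conservative: every case it cannot classify falls into the guided mode, so a parsing gap costs time rather than a broken build.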

The 6 lessons we learned

No automated feedback loop, no production

After our early tests, a brutal realization: an agent that fixes code but can't verify it works is an agent that plants time bombs. The only variable that makes the difference between a cute pilot and a real deployment is the quality of the feedback loop — unit, integration, and above all E2E automation (Playwright, Cypress). Our rule now: before even talking about an AI agent, we audit and level up the test harness.

Scope is everything

We initially wanted the agent to cover "all updates". Failure. By narrowing to minor / patch and documented CVEs, we hit a high acceptance rate and a trust relationship with reviewers.

The test is the real value

An agent that proposes a fix without testing it is Dependabot. An agent that runs the tests before pushing the PR is a real teammate. The validation loop is what separates a tool from an agent.

Never auto-merge

Technically, we could auto-merge PRs whose tests are green. We don't. A human always validates. It's a governance choice, not a technical limit.

Sovereignty = architecture, not marketing

When a regulatory context says "data cannot leave", you don't solve that with an NDA. You solve it with architecture. vLLM + Qwen + Autogit open-source on a local GPU = a concrete, auditable, defensible answer.

Observability is not optional

Every week we measure: PRs proposed, PRs merged, PRs rejected, rejection reasons. Without that, there's no way to tell whether the agent is improving or whether the harness is drifting.

7. What does a harness stack look like in 2026?

The tooling landscape moves fast. Here's what we recommend today, based on the need:

| Need | Tools |
|---|---|
| Agent orchestration | LangGraph, CrewAI, AutoGen, OpenAI Agents SDK, Autogit |
| Tool protocol | MCP (Model Context Protocol), the emerging standard |
| Local model server | vLLM (prod, serious throughput), Ollama (dev / POC), TGI |
| Sandbox / isolated execution | Docker sandboxes, sandboxagent.dev, E2B |
| Open-source models | Qwen3.5 / Qwen3.6 (stronger agentic promise), Llama 3.3, Mistral, DeepSeek |
| Feedback loop (tests) | Playwright, Cypress, Vitest, Jest, Pytest, JUnit |
| Evals | Braintrust, Langfuse, Promptfoo |
| Observability | LangSmith, Arize, Helicone, Langfuse |
| Guardrails | Guardrails.ai, NeMo Guardrails |
| RAG | LlamaIndex, Haystack, Vectara |

Recommended harness stack in 2026

8. Key takeaways

- → The model is no longer the bottleneck. The harness is.

- → Building an AI agent in production = 20% prompt, 80% engineering around it.

- → Failed AI projects are almost always harness problems, not model problems.

- → Without an automated feedback loop (unit tests + E2E Playwright), an AI agent has no business in production.

- → Sovereignty isn't a marketing option. It's an architecture, and it's attainable with an open-source stack.

- → Golden rule: what's critical must be deterministic, what's creative can be non-deterministic. The harness draws the line.

Did you know? Harness fun facts

Three details people often overlook — but they say a lot about a harness's maturity.

“Switch to planning mode” is a KPI

When Cursor or Claude Code suggests that you switch to planning mode, the delay between the suggestion and your click can be measured. It's a cognitive-friction metric: the longer you take, the further the agent had drifted. Worth tracking in any internal harness.

“Continue” reveals when the agent is tiring

Every time you type “continue” to an agent, that's a signal. Counted across a session, those prompts let you pinpoint when the agent starts to stall, lose the thread, or spin — usually after N tool calls or past a given context volume. A great proxy for context rot and skill limits.
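Counting those nudges is cheap to sketch. The function below records, for each "continue"-style user message, how many tool calls the agent had made in the session by that point. The `Turn` shape and the list of phrases matched are assumptions; adapt both to your own transcripts.

```typescript
// Hypothetical transcript shape: toolCalls is the number of tool calls made in that turn.
type Turn = { role: "user" | "assistant"; content: string; toolCalls: number };

// Returns, for each "continue"-style nudge, the cumulative number of tool calls
// made before it. Clusters in these numbers hint at where the agent stalls.
function continueNudges(turns: Turn[]): number[] {
  // Matches a message that is only a nudge, not a sentence that happens to contain the word.
  const nudge = /^\s*(continue|go on|keep going)\s*[.!]?\s*$/i;
  const positions: number[] = [];
  let toolCallsSoFar = 0;
  for (const turn of turns) {
    if (turn.role === "assistant") {
      toolCallsSoFar += turn.toolCalls;
    } else if (nudge.test(turn.content)) {
      positions.push(toolCallsSoFar);
    }
  }
  return positions;
}
```

Aggregated over many sessions, the distribution of these positions gives a rough estimate of the "N tool calls" after which the agent tends to stall.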

AI agent benchmarks are a direction, not truth

A good number of the industry's most popular AI-agent benchmarks are fundamentally outdated or biased — a textbook case of Goodhart's law: “when a measure becomes a target, it ceases to be a good measure”. Worth reading: Berkeley RDI — Trustworthy Benchmarks. Use them as a compass, not a scoreboard.

Want to explore an AI agent in your delivery?

At VOID, we always start with the feedback loop. Three entry points, depending on your maturity:

Testability audit

Assessment of coverage and quality of your automated tests.

Test harness uplift

Installing the Playwright / unit / integration suite that will enable the AI agent.

AI agent framing

Once tests are in place, we design the agentic scaffolding — sovereign if needed.
